
Learn how fake government websites steal fees and data, how to spot them quickly, and how to report, disrupt, and reduce the impact of real fraud.

A constituent is trying to renew a license, check a benefit status, pay a tax bill, request records, or fix something that feels urgent. They search. They click. The site looks official enough. The next thing you know, someone has paid a bogus fee, handed over identity data, or called a fake support line that keeps them on the hook until money changes hands.

Fake government websites are not a minor nuisance. They are a repeatable fraud channel that weaponizes public trust and high-intent searches. The harm shows up quickly as complaints, increased contact center volume, disputed payments, and reputational damage that cannot be fixed with a single warning page. For government entities, the “brand” is credibility. Attackers know that a believable seal and a calm checkout flow can extract money and identity data faster than noisy malware ever could.

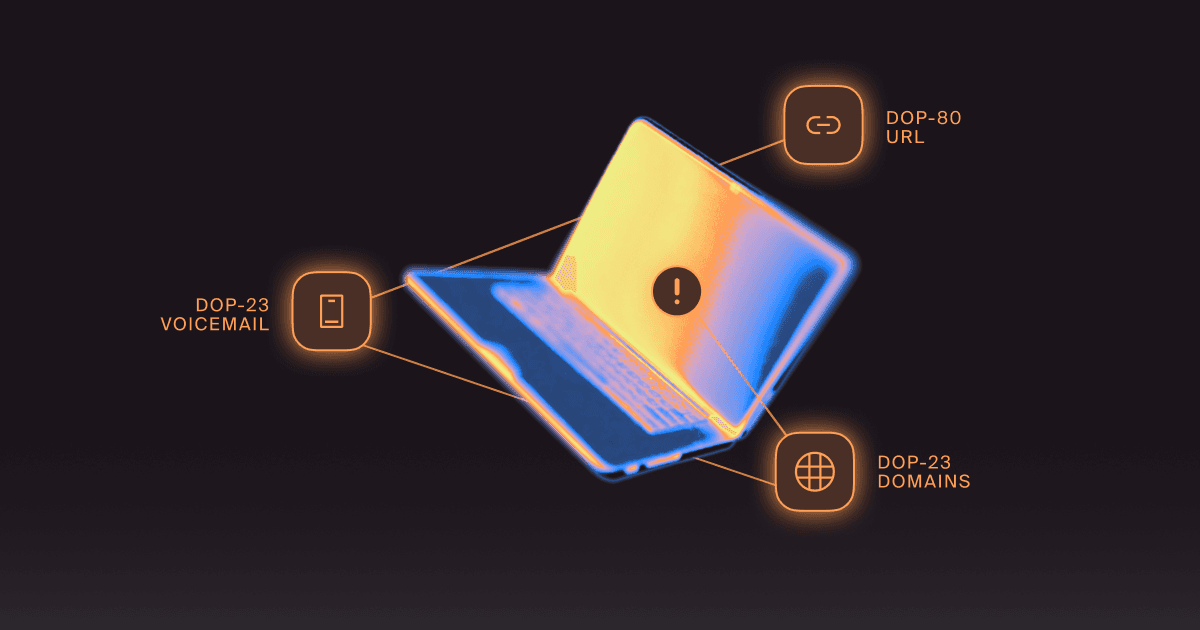

In the U.S., attackers usually cannot register a real “.gov” domain. So most scams rely on lookalike commercial domains, subdomain tricks, or compromised sites that redirect constituents into a fraudulent flow.
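A quick script can make the first check concrete: does the hostname actually end in `.gov`, or does it merely contain those letters somewhere? The sketch below assumes a full URL with a scheme, and `is_dot_gov` is a hypothetical helper. A non-.gov result is a signal, not proof, because some legitimate state and local services run on other domains.

```python
from urllib.parse import urlsplit


def is_dot_gov(url: str) -> bool:
    """Return True only if the URL's hostname sits under the .gov top-level domain."""
    # urlsplit lowercases the hostname; strip a trailing root dot such as "usa.gov."
    host = (urlsplit(url).hostname or "").rstrip(".")
    # Only the right-most label counts: "dmv.gov.renew-now.com" ends in ".com".
    return host.endswith(".gov")


print(is_dot_gov("https://www.usa.gov/"))               # True
print(is_dot_gov("https://dmv.gov.renew-now.com/pay"))  # False
```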

Fake government websites most often monetize through bogus payments, identity data capture, or callback traps that route victims to fraudulent support calls. The fastest detection comes from checking domains and flow signals such as forms, payment mechanisms, and phone prompts. The strongest response path runs in parallel: capture evidence, disrupt distribution, file takedown reports, and publish guidance for constituents that points to verified official channels. Long-term reduction comes from treating scams as repeatable clusters rather than one-off URLs.
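The three monetization models can be written down as a simple classification step, which helps route a report to the right owner. In this sketch, `FlowSignals` and `classify` are hypothetical names, and the signals are assumed to come from whoever reviews the page; a single site can match more than one model.

```python
from dataclasses import dataclass


@dataclass
class FlowSignals:
    """What a reviewer observed on a suspect page (hypothetical schema)."""
    asks_for_payment: bool = False        # fee, card, or wallet prompt
    asks_for_identity_data: bool = False  # SSN, date of birth, portal credentials
    pushes_phone_call: bool = False       # "call now" prompts or a prominent support number


def classify(signals: FlowSignals) -> list[str]:
    """Map observed flow signals to the three monetization models; a site can match several."""
    models = []
    if signals.asks_for_payment:
        models.append("bogus_payment")
    if signals.asks_for_identity_data:
        models.append("data_capture")
    if signals.pushes_phone_call:
        models.append("callback_trap")
    return models or ["unclassified"]


print(classify(FlowSignals(asks_for_payment=True, pushes_phone_call=True)))
# ['bogus_payment', 'callback_trap']
```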

Fake government website scams persist because they are fast to launch, cheap to rotate, and effective at capturing high-intent traffic. Attackers can quickly clone a service page, buy distribution through ads or seeded posts, and rotate domains and infrastructure when a campaign variant is flagged.

The bigger drivers are practical:

If you want to reduce impact, you need to break the funnel, not just remove a page.

Fake government websites generally fall into three buckets. Each bucket has different signals and different response priorities.

Payment scam sites pretend to offer official services, then charge processing or filing fees that are not actually required.

What it looks like:

Data harvesting sites collect sensitive PII or portal credentials that can be reused for identity theft, benefit fraud, or account takeover attempts.

What it looks like:

Callback traps exist to get a phone call, then use social engineering to extract money and data in real time.

What it looks like:

These scams work like a marketing funnel because that is exactly what they are.

Traffic acquisition: Attackers pull users from search ads, manipulated search results (SEO abuse or compromised-site redirects), social posts, QR codes on flyers, text messages, email lures, and forum or community threads that appear to offer “helpful” guidance.

Landing and trust-building: The page mimics agency branding, familiar language, and official-seeming structure. It often over-indexes on credibility cues: seals, badges, and procedural copy.

Conversion path: Users are pushed into a fast path: pay, submit data, or call. The site keeps navigation minimal because distraction reduces conversion.

Monetization and escalation: Payment scams take the money and disappear. Data harvesters sell or reuse the information they collect. Callback traps adapt in real time, upsell services, and keep victims engaged.

Rotation and repeat: When a site is flagged, the campaign is moved to a sibling domain with the same template, the same processor patterns, and sometimes the same phone number.
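Because rotated sites often reuse the same template, comparing page wording is one way to tie a new domain back to a known campaign. This sketch uses word shingles and Jaccard similarity, a generic near-duplicate technique chosen for illustration; the function names and the shingle size `k=5` are assumptions, and any cut-off for "same template" would need tuning against your own cases.

```python
import re


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Split page text into overlapping k-word sequences ("shingles")."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def template_similarity(page_a: str, page_b: str, k: int = 5) -> float:
    """Jaccard similarity of two pages' shingle sets: 1.0 means identical wording."""
    a, b = shingles(page_a, k), shingles(page_b, k)
    if not a and not b:
        return 0.0  # too short to compare
    return len(a & b) / len(a | b)


original = "Renew your driver license online today. Pay the processing fee to avoid late penalties and suspension."
rotated = "Renew your vehicle registration online today. Pay the processing fee to avoid late penalties and suspension."
print(template_similarity(original, rotated))  # 0.5
```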

Check the domain and its structure before you do anything else, because it is often the cleanest early indicator.

Watch for:

Also watch for jurisdiction mismatch. A site claiming to handle a state or county service, but using generic “national” wording, no agency address, and no verifiable contact directory, is often a broker front or an outright scam.

A classic trick is putting an agency-sounding label as a subdomain on an unrelated domain, hoping the user only glances at the left side of the URL.
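You can read past the subdomain trick by looking at the right-hand end of the hostname, which is the part someone actually registered. This is a deliberately naive sketch: `naive_registrable_domain` is a hypothetical helper that keeps the last two labels, so it mishandles multi-part public suffixes such as `co.uk`. Real tooling should consult the Public Suffix List.

```python
from urllib.parse import urlsplit


def naive_registrable_domain(url: str) -> str:
    """Return the last two hostname labels, e.g. 'renew-now.com' for 'dmv.gov.renew-now.com'.

    Simplification: ignores multi-label public suffixes such as 'co.uk'.
    """
    host = (urlsplit(url).hostname or "").rstrip(".")
    labels = host.split(".")
    return ".".join(labels[-2:]) if len(labels) >= 2 else host


print(naive_registrable_domain("https://dmv.gov.renew-now.com/pay"))  # renew-now.com
print(naive_registrable_domain("https://www.irs.gov/payments"))       # irs.gov
```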

Attackers know people equate gov-like patterns with legitimacy. When they cannot use official naming conventions, they compensate with “gov” language, seals, and service keywords designed to pass a quick visual check.
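A simple keyword check can surface hostnames that borrow gov-like language without being on `.gov`. The watch list below is purely illustrative, and substring matching is a deliberate trade-off: it catches run-together names like `usagov-help` but will also produce false positives (for example, "tax" inside "syntax"), so treat hits as triage input, not verdicts.

```python
from urllib.parse import urlsplit

# Illustrative watch list; tune it to the services your agency actually offers.
GOV_LIKE_KEYWORDS = {"gov", "dmv", "license", "renewal", "permit", "benefits", "tax"}


def gov_language_hits(url: str) -> set[str]:
    """Return watch-list keywords that appear in a hostname outside the .gov TLD."""
    host = (urlsplit(url).hostname or "").rstrip(".")
    if host.endswith(".gov"):
        return set()  # .gov hosts are out of scope for this lookalike check
    # Substring match on purpose: attackers often run words together ("usagov-help").
    return {kw for kw in GOV_LIKE_KEYWORDS if kw in host}


print(sorted(gov_language_hits("https://dmv-renewal-gov.com/start")))
# ['dmv', 'gov', 'renewal']
```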

Look for:

The page almost always reveals the scam model once you know what to scan for.

Legitimate government sites usually provide broader context, navigation, and accessibility elements. Scam sites often feel like a single-purpose checkout lane.

Common tells:

Some sites try to protect themselves with fine print that suggests they are “not affiliated” while still presenting the page as official. If the entire legitimacy rests on a disclaimer, treat it as hostile.

If the page displays an error state and offers an immediate fix via payment or a call, that is a conversion tactic, not a service.
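The page tells above can be turned into a rough text scan to help reviewers triage faster. The regular expressions and tell names here are assumptions, not a vetted ruleset; they only show how the "error state plus instant fix" pattern can be flagged automatically and then confirmed by a person.

```python
import re

# Hypothetical patterns based on the tells described above; expect to tune them.
TELLS = {
    "disclaimer": re.compile(r"not (?:affiliated|associated) with", re.I),
    "fee_prompt": re.compile(r"\b(?:processing|filing|service) fee\b", re.I),
    "error_state": re.compile(r"\b(?:suspended|expired|failed|on hold)\b", re.I),
    "call_prompt": re.compile(r"\bcall (?:now|us|toll[- ]free)\b", re.I),
}


def page_tells(text: str) -> set[str]:
    """Return the names of tells whose pattern appears in the page text."""
    return {name for name, pattern in TELLS.items() if pattern.search(text)}


def error_with_instant_fix(text: str) -> bool:
    """Flag an error state that is paired with a fee prompt or a call prompt."""
    tells = page_tells(text)
    return "error_state" in tells and bool(tells & {"fee_prompt", "call_prompt"})


sample = ("Your license is suspended. Pay the processing fee now to restore it. "
          "We are not affiliated with any state agency.")
print(sorted(page_tells(sample)))      # ['disclaimer', 'error_state', 'fee_prompt']
print(error_with_instant_fix(sample))  # True
```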

The form and payment flow tell you what the attacker wants, and how quickly victims are likely to be harmed.

Red flags include:

Many scams rely on generic payment experiences that do not match government processes. You might see:

Some sites start small, then escalate:

Each step increases loss and makes victims feel committed.

Scams often deliver vague confirmation numbers and promises of follow-up, buying time before the victim realizes nothing is happening.
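Reading the form itself is often the fastest way to see what is at stake. This sketch collects input names with Python's standard `html.parser` module and sorts them into categories; the field-name lists are hypothetical examples, and real scam forms frequently use obfuscated or generic names, so a person should still review the rendered page.

```python
from html.parser import HTMLParser

# Hypothetical mapping from common form-field names to what the attacker is after.
FIELD_CATEGORIES = {
    "payment": {"card_number", "cc_number", "cvv", "expiry", "routing_number"},
    "identity": {"ssn", "dob", "date_of_birth", "drivers_license", "passport"},
    "credentials": {"username", "password", "pin"},
}


class InputNameCollector(HTMLParser):
    """Collect the name attribute of every <input>, <select>, and <textarea>."""

    def __init__(self) -> None:
        super().__init__()
        self.names: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in {"input", "select", "textarea"}:
            name = dict(attrs).get("name")
            if name:
                self.names.append(name.lower())


def classify_form(field_names: list[str]) -> dict[str, list[str]]:
    """Group a form's input names by category so the likely harm is visible at a glance."""
    found: dict[str, list[str]] = {}
    for name in field_names:
        key = name.strip().lower()
        for category, names in FIELD_CATEGORIES.items():
            if key in names:
                found.setdefault(category, []).append(key)
    return found


collector = InputNameCollector()
collector.feed('<form><input name="SSN"><input name="dob"/>'
               '<input name="card_number"><input name="email"></form>')
print(classify_form(collector.names))
# {'identity': ['ssn', 'dob'], 'payment': ['card_number']}
```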

Callback traps turn a static web scam into a dynamic social engineering operation, thereby increasing both conversions and harm.

A live agent can:

If the site pushes a call, capture:

Phone-based fraud needs a response plan that includes telecom and contact center stakeholders, not just web takedowns.
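Phone numbers are among the most reusable indicators, so it helps to normalize them before comparing sites. This sketch assumes North American numbers only; the pattern and the `+1XXXXXXXXXX` output format are illustrative choices, and international numbers would need a dedicated parsing library.

```python
import re

# Matches common North American formats such as (800) 555-0199, 800-555-0199,
# and +1 800.555.0199; the lookbehind avoids matching inside longer digit runs.
NANP_PHONE = re.compile(
    r"(?<!\d)(?:\+?1[\s.\-]?)?\(?(\d{3})\)?[\s.\-]?(\d{3})[\s.\-]?(\d{4})\b"
)


def extract_phone_numbers(text: str) -> set[str]:
    """Return phone numbers normalized to +1XXXXXXXXXX so they can be compared across sites."""
    return {"+1" + "".join(m.groups()) for m in NANP_PHONE.finditer(text)}


print(extract_phone_numbers("Call (800) 555-0199 or +1 800.555.0199 today"))
# {'+18005550199'}
```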

Scammers drive traffic with distribution tactics that look like normal discovery, which is why those tactics keep working.

Attackers often buy search ads against:

Even a short-lived ad run can generate meaningful revenue.

Some campaigns build thin pages stuffed with task keywords and location terms to rank. Others hijack compromised sites and redirect traffic.

Offline lures happen too. A flyer on a community board or a QR code in a misleading notice can route victims directly to the scam page, bypassing careful URL checking.

Attackers post fake helpful links in comment sections and local groups, especially around deadlines or hot issues.

A fast response is a parallel workflow with clear owners, evidence standards, and a disruption goal.

Classify it immediately:

Collect:

Do not wait for hosting action to start reducing exposure:

Different targets respond to different evidence:

If your team needs a concise definition of what a scam website takedown actually involves, this Doppel overview is the right baseline.

Give constituents a safe landing page and verified contacts, but do not boost the scam domain by linking to it.

Your internal response playbook should be ruthless about speed and consistency.

To help prioritize which impersonation signals matter most, an impersonation risk assessment framework can make triage more repeatable.

If you cannot measure it, you cannot defend prioritization or budget, and you will keep reacting instead of improving.

Track:

Depending on your visibility, watch:

A takedown count alone is not enough. Track:

For teams building a broader response plan around these metrics, this is a useful framing of why impersonation response plans fail when treated like internal IT tickets.
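Time-based metrics are straightforward to compute once each incident records a few timestamps. The record shape below (`first_seen`, `detected`, `disrupted`) is a hypothetical schema, and "first seen" will often be an estimate, such as the earliest report or observation of the site; the median is used so one unusually slow case does not dominate.

```python
from datetime import datetime
from statistics import median


def hours_between(start: str, end: str) -> float:
    """Hours elapsed between two ISO 8601 timestamps."""
    return (datetime.fromisoformat(end) - datetime.fromisoformat(start)).total_seconds() / 3600


def response_metrics(incidents: list[dict]) -> dict[str, float]:
    """Median time-to-detection and time-to-disruption, in hours, across closed incidents."""
    return {
        "median_hours_to_detect": median(hours_between(i["first_seen"], i["detected"]) for i in incidents),
        "median_hours_to_disrupt": median(hours_between(i["detected"], i["disrupted"]) for i in incidents),
    }


incidents = [
    {"first_seen": "2024-05-01T08:00:00", "detected": "2024-05-01T20:00:00", "disrupted": "2024-05-02T08:00:00"},
    {"first_seen": "2024-05-03T00:00:00", "detected": "2024-05-03T06:00:00", "disrupted": "2024-05-03T10:00:00"},
    {"first_seen": "2024-05-04T00:00:00", "detected": "2024-05-05T00:00:00", "disrupted": "2024-05-05T02:00:00"},
]
print(response_metrics(incidents))
# {'median_hours_to_detect': 12.0, 'median_hours_to_disrupt': 4.0}
```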

Treat scam sites as a cluster, harden official discovery, and shorten the attacker’s profitable window.

Look for shared elements:

When you can connect assets, you can remove more than one domain at a time, and you can anticipate the next rotation.
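Connecting assets is essentially a grouping problem. This sketch uses union-find to merge any domains that share an indicator such as a phone number, a template fingerprint, or a payment processor ID; the domains and indicator strings are hypothetical, and links should be reviewed before acting, since a common processor alone may connect unrelated operators.

```python
from collections import defaultdict


def cluster_sites(indicators: dict[str, set[str]]) -> list[set[str]]:
    """Group domains that share any indicator, directly or through a chain of shared values."""
    parent = {domain: domain for domain in indicators}

    def find(d: str) -> str:
        # Follow parent links to the cluster root, halving the path as we go.
        while parent[d] != d:
            parent[d] = parent[parent[d]]
            d = parent[d]
        return d

    first_owner: dict[str, str] = {}
    for domain, values in indicators.items():
        for value in values:
            if value in first_owner:
                parent[find(domain)] = find(first_owner[value])  # merge the two clusters
            else:
                first_owner[value] = domain

    clusters: dict[str, set[str]] = defaultdict(set)
    for domain in indicators:
        clusters[find(domain)].add(domain)
    return list(clusters.values())


sites = {
    "renew-dmv-now.com": {"+18005550199", "tpl:ab12"},
    "dmv-renewal-help.net": {"tpl:ab12"},
    "license-fast.org": {"+18005550199"},
    "unrelated-scam.com": {"+18885550123"},
}
for cluster in cluster_sites(sites):
    print(sorted(cluster))
# ['dmv-renewal-help.net', 'license-fast.org', 'renew-dmv-now.com']
# ['unrelated-scam.com']
```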

Attackers exploit confusion. Reduce it by:

If reporting is hard, victims do not report. Provide:

Your contact center often sees the fraud first. Train them to capture:

If phone scams are a major vector, the vishing pattern overlaps heavily with these campaigns, and it is worth aligning web and phone disruption workflows.

The mistakes are usually operational, not technical.

This is a cross-functional incident. If legal, comms, web, fraud, and contact center teams are not aligned, responses become slow and inconsistent.

A domain can stay live while you cut its traffic by reporting ads and suppressing scam listings. Reducing exposure immediately reduces harm.

If you publish the scam URL verbatim in a highly indexed page, you can accidentally help it rank. Keep warnings focused on official destinations and scam signals.
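When a scam URL has to appear somewhere, such as an internal report, a partner ticket, or a takedown request, a common convention is to "defang" it so it cannot be clicked by accident. This is a minimal sketch of that convention; `defang` is a hypothetical helper meant for internal and partner use, not a reason to publish the URL in public warnings.

```python
def defang(url: str) -> str:
    """Rewrite a URL so browsers, chat tools, and ticketing systems are unlikely to auto-link it."""
    return (
        url.replace("https://", "hxxps://", 1)
        .replace("http://", "hxxp://", 1)
        .replace(".", "[.]")
    )


print(defang("https://dmv-renew-now.com/pay"))  # hxxps://dmv-renew-now[.]com/pay
```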

If every takedown request starts from scratch, you will always be late. Build templates, checklists, and a minimum evidence bar that your team can hit quickly.
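A minimum evidence bar is easier to hit when it is written down as a checklist the tooling can enforce. The fields and rules in this sketch are hypothetical, not a standard that hosting providers or registrars require; the SHA-256 hash simply lets a later reviewer confirm the captured HTML was not altered.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class EvidenceRecord:
    """Hypothetical minimum evidence bundle for one scam URL."""
    url: str
    scam_model: str  # e.g. "bogus_payment", "data_capture", "callback_trap"
    page_html: str = ""
    screenshot_path: str = ""
    phone_numbers: set[str] = field(default_factory=set)
    captured_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    @property
    def content_sha256(self) -> str:
        """Hash of the captured HTML, so later reviewers can check it was not altered."""
        return hashlib.sha256(self.page_html.encode("utf-8")).hexdigest()

    def missing_items(self) -> list[str]:
        """List what still blocks this record from meeting the minimum evidence bar."""
        missing = []
        if not self.page_html:
            missing.append("page_html")
        if not self.screenshot_path:
            missing.append("screenshot")
        if self.scam_model == "callback_trap" and not self.phone_numbers:
            missing.append("phone_numbers")
        return missing


record = EvidenceRecord(url="https://dmv-renew-now.com/pay", scam_model="callback_trap",
                        page_html="<html></html>")
print(record.missing_items())  # ['screenshot', 'phone_numbers']
```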

Fake government websites do not just target constituents. The same impersonation narratives often hit internal staff through phishing emails, fake vendor outreach, or phone-based support scams. When employees fail to recognize those narratives early, attackers gain credibility and reuse them against the public.

This is where Human Risk Management (HRM) strengthens external fraud defense. HRM programs test and improve how employees respond to real-world deception across email, SMS, and voice, then tighten verification and reporting workflows before a live campaign causes harm.

For public sector teams, that means:

Simulation and security awareness training should mirror the exact scams targeting constituents. When internal teams can spot impersonation patterns quickly, agencies reduce the likelihood that those same tactics succeed externally.

Agencies should prioritize continuous detection of lookalike sites, fast investigation workflows, and takedown support that quickly reduces victim exposure. For public sector teams managing constituent fraud across web, phone, and search channels, a purpose-built government-focused brand impersonation solution can centralize monitoring, evidence capture, and disruption workflows in one place. Learn how Doppel supports public sector agencies here.

In practice, that means maintaining continuous external monitoring for lookalike domains and scam sites, correlating related infrastructure into campaigns, and pushing those cases through a consistent investigation and takedown workflow. If you want a practical discussion of how reporting and evidence routing work for brands, this is a solid read.
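Lookalike monitoring usually starts by comparing newly observed hostnames against the ones you actually operate. This sketch uses Python's `difflib.SequenceMatcher`, a generic string-similarity measure swapped in for illustration; the official hostnames and the 0.8 threshold are hypothetical, and it will miss many homoglyph or keyword-stuffed domains.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical: replace with the hostnames your agency actually operates.
OFFICIAL_HOSTS = ["mystatedmv.gov", "mystatetax.gov"]


def closest_official(candidate: str, threshold: float = 0.8) -> Optional[tuple[str, float]]:
    """Return (official_host, similarity) if the candidate resembles an official host but is not one."""
    host = candidate.lower().rstrip(".")
    if host in OFFICIAL_HOSTS:
        return None  # an exact match is the real site, not a lookalike
    best = max(OFFICIAL_HOSTS, key=lambda official: SequenceMatcher(None, host, official).ratio())
    score = SequenceMatcher(None, host, best).ratio()
    return (best, score) if score >= threshold else None


match = closest_official("mystate-dmv.com")
print(match[0], round(match[1], 2))  # mystatedmv.gov 0.83
```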

Constituent fraud is a race. Teams win by detecting earlier, disrupting distribution faster, and running a repeatable investigation and takedown workflow that reduces harm over time. If your agency keeps seeing the same scam patterns, treat them as campaign clusters, shorten time-to-disruption, and standardize evidence capture so takedowns move faster across partners. For a deeper look at how public sector teams operationalize this approach, explore our government industry overview.

Doppel’s platform is built for continuous external monitoring plus investigation and takedown workflows that help reduce exposure across related scam assets.
